The era of the $20-per-month "AI tax" is dying. While American labs are burning billions to eke out marginal gains, DeepSeek just dropped a preview of its V4 model that claims frontier-level performance for the price of a gumball. It's not a minor update. We're looking at a 1.6-trillion-parameter beast that handles a million tokens of context without breaking a sweat or your bank account.

Honestly, the most shocking part isn't the scale; it's the efficiency. Most frontier models are bloated. They require massive server farms just to answer a simple coding question. DeepSeek V4 uses a Mixture-of-Experts (MoE) architecture that activates only about 49 billion of its 1.6 trillion parameters for each token, roughly 3% of the model. It's like having a library with a trillion books but only needing a small, expert team to find exactly what you need.
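To make the "only a fraction of the model runs" idea concrete, here is a minimal sketch of generic top-k expert routing in Python. It is not DeepSeek's routing code (their production router differs in its details); the toy experts, the random router weights, and k=2 are made-up assumptions for illustration.

```python
import math
import random

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def moe_forward(token, router, experts, k=2):
    """Send one token vector to its top-k experts and blend their outputs.

    Only the k selected experts are ever called; the rest stay idle,
    which is where MoE's compute savings come from.
    """
    # Router: one affinity score per expert (dot product with that expert's router row).
    scores = [sum(t * w for t, w in zip(token, row)) for row in router]
    # Indices of the k highest-scoring experts.
    chosen = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:k]
    # Gate weights: softmax over the chosen experts' scores only.
    gates = softmax([scores[i] for i in chosen])
    out = [0.0] * len(token)
    for gate, i in zip(gates, chosen):
        y = experts[i](token)  # run just this expert
        out = [o + gate * v for o, v in zip(out, y)]
    return out, chosen

# Toy setup (hypothetical): 8 experts, each just scales its input by a different factor.
rng = random.Random(0)
dim, n_experts = 4, 8
router = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n_experts)]
experts = [(lambda x, s=i + 1: [s * v for v in x]) for i in range(n_experts)]

token = [0.5, -1.0, 0.25, 2.0]
out, chosen = moe_forward(token, router, experts, k=2)
print("experts run:", chosen, "of", n_experts)
# The article's numbers: ~49B active out of 1.6T total parameters.
print(f"active share per token: {49e9 / 1.6e12:.1%}")
```

Each token pays for only the experts it is routed to, so compute per token tracks the active parameter count rather than the total.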

Why the 1 Million Token Context Actually Matters

Most people think a long context window is just for "chatting longer." That's wrong. A million tokens is roughly 750,000 words of English (using the common rule of thumb of about 0.75 words per token), which is several full-length novels, or a medium-sized codebase of 500 or so modestly sized files, in one go. If you're a developer, you aren't just pasting a single function anymore; you're feeding the model your entire repository.
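Before pasting a whole repository, it helps to estimate whether it fits. The sketch below walks a directory and estimates tokens using an assumed average of about 4 characters per token; it does not use DeepSeek's actual tokenizer, and the extension list is a hypothetical default you should adjust.

```python
import os

# Assumption: roughly 4 characters per token for English text and code.
# Real counts depend on the model's tokenizer, which this does not use.
CHARS_PER_TOKEN = 4
CONTEXT_LIMIT = 1_000_000

# Hypothetical default list of source extensions to count; adjust for your repo.
SOURCE_EXTENSIONS = (".py", ".js", ".ts", ".go", ".rs", ".java", ".c", ".h", ".md")

def estimate_repo_tokens(root, extensions=SOURCE_EXTENSIONS):
    """Walk `root`, count source files, and estimate their combined token count."""
    n_files = 0
    total_chars = 0
    for dirpath, dirnames, filenames in os.walk(root):
        # Prune hidden directories such as .git so their contents are skipped.
        dirnames[:] = [d for d in dirnames if not d.startswith(".")]
        for name in filenames:
            if name.endswith(extensions):
                with open(os.path.join(dirpath, name), encoding="utf-8", errors="ignore") as f:
                    total_chars += len(f.read())
                n_files += 1
    return n_files, total_chars // CHARS_PER_TOKEN

def fits_in_context(tokens, limit=CONTEXT_LIMIT):
    """True if the estimated token count fits in the context window."""
    return tokens <= limit
```

Usage: `files, tokens = estimate_repo_tokens("path/to/repo")`, then `fits_in_context(tokens)`. By this rule of thumb, 500 files averaging 8,000 characters come to 4 million characters, or about 1 million tokens, so a 500-file repo only fits if its files are fairly small.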

The "lost in the middle" problem usually plagues long-context models: give them a massive document, and they forget what happened on page 300. DeepSeek claims to have fixed this with something called Engram Conditional Memory. In internal "Needle-in-a-Haystack" tests, which bury a single fact somewhere in a huge document and ask the model to retrieve it, the model hit 97% accuracy at the million-token scale. For comparison, many models built on standard attention see their recall fall off sharply once you cross the 128k mark.

This matters for legal discovery, medical research, and complex engineering. You can ask about a specific contradiction between a contract's preamble and its 40th annex, and if those internal numbers hold up, V4 should find it rather than guess.
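The needle-in-a-haystack idea is simple enough to sketch. The version below is a minimal illustration, not DeepSeek's internal harness: it buries one sentence at several depths in filler text of several lengths and reports what fraction of answers contain the hidden fact. `ask_model`, the filler sentences, and the secret are hypothetical placeholders; a real run would scale the filler up to hundreds of thousands of tokens.

```python
import random

FILLER = [
    "The quarterly report was filed on time.",
    "Nobody remembered who booked the meeting room.",
    "The weather stayed grey for most of the week.",
]

def build_haystack(needle, n_sentences, depth, seed=0):
    """Bury `needle` at relative `depth` (0.0 = start, 1.0 = end) among filler sentences."""
    rng = random.Random(seed)
    sentences = [rng.choice(FILLER) for _ in range(n_sentences)]
    position = round(depth * n_sentences)
    sentences.insert(position, needle)
    return " ".join(sentences), position

def run_grid(ask_model, sizes, depths, secret="PELICAN-42"):
    """Fraction of (size, depth) cells where the model's answer contains the secret.

    `ask_model` is a hypothetical callable: prompt string in, answer string out.
    """
    hits = []
    for n in sizes:
        for depth in depths:
            text, _ = build_haystack(f"The secret code word is {secret}.", n, depth)
            answer = ask_model(text + "\n\nWhat is the secret code word?")
            # Simplest possible grader: does the answer mention the secret?
            hits.append(secret.lower() in answer.lower())
    return sum(hits) / len(hits)
```

Sweeping both length and depth is the point: a model can score well on needles near the start or end and still miss the ones buried in the middle.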

The Brutal Economics of V4 vs the West

Let's talk about the money. AI is expensive because training is expensive. But DeepSeek is proving that you don't need a hundred-billion-dollar "Stargate" supercomputer to compete.

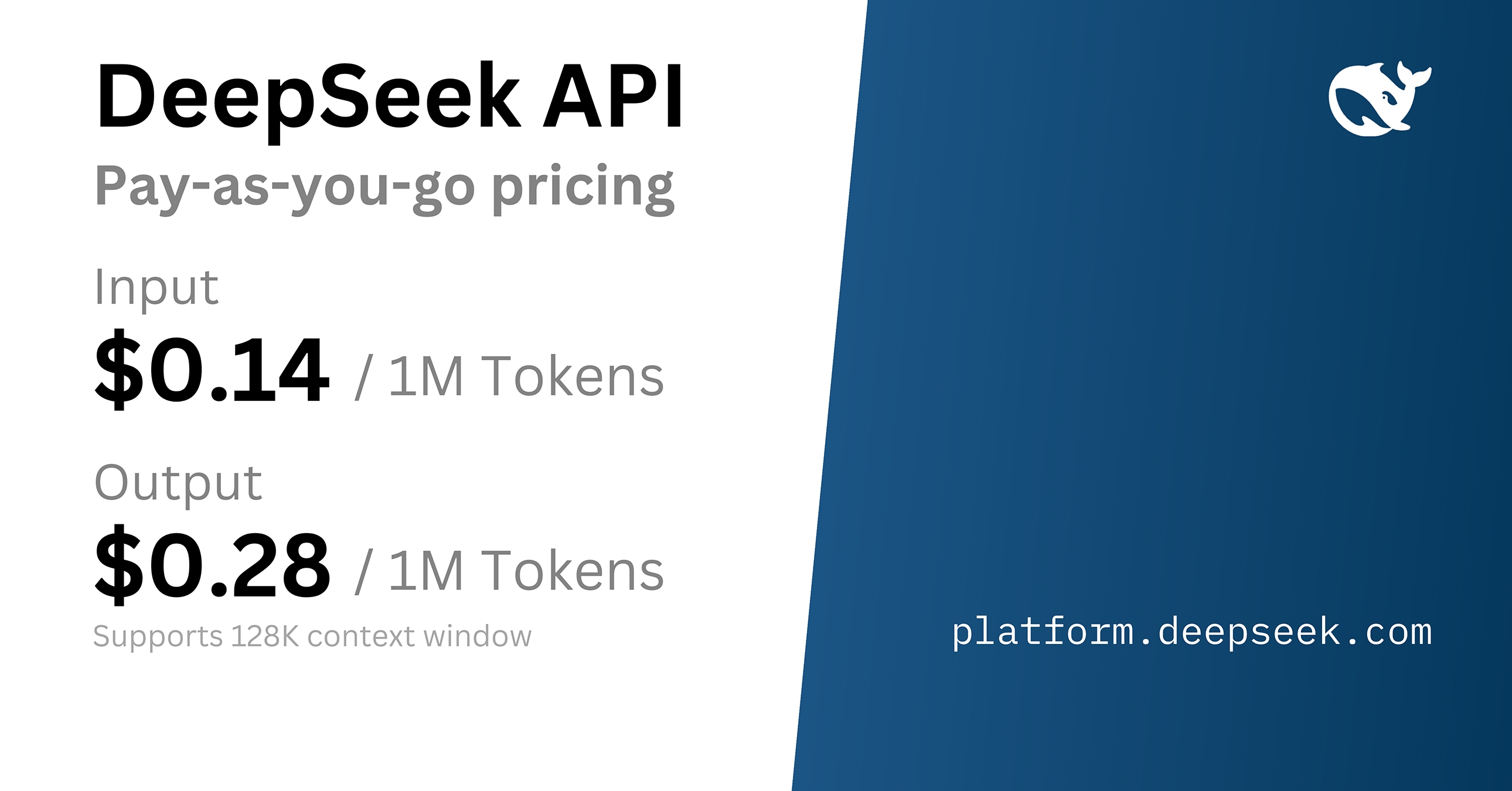

- API Pricing: The Flash variant costs as little as $0.028 per million tokens on a cache hit. Even the high-end Pro variant costs only $1.74 per million tokens on a cache miss.

- Comparison: If you're running heavy workloads on Claude Opus 4.6 or GPT-5.2, you're likely paying 10 to 20 times more for similar reasoning capabilities.
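To see what those per-million-token prices mean for an actual bill, here is a back-of-the-envelope calculator. The 500-million-token monthly workload is a hypothetical assumption, the two prices are the ones quoted above, and the sketch ignores any split between input and output pricing, which the article does not give.

```python
PRO_CACHE_MISS = 1.74    # $ per million tokens, Pro on a cache miss (from the article)
FLASH_CACHE_HIT = 0.028  # $ per million tokens, Flash on a cache hit (from the article)

def cost_usd(tokens, price_per_million):
    """Dollar cost of `tokens` at a price quoted per million tokens."""
    return tokens / 1_000_000 * price_per_million

def savings_fraction(price_multiplier):
    """Share of spend saved if the alternative costs `price_multiplier` times more."""
    return 1 - 1 / price_multiplier

monthly_tokens = 500_000_000  # hypothetical workload
pro = cost_usd(monthly_tokens, PRO_CACHE_MISS)
flash = cost_usd(monthly_tokens, FLASH_CACHE_HIT)
print(f"Pro, all cache misses: ${pro:,.2f}")
print(f"Flash, all cache hits: ${flash:,.2f}")
for m in (10, 20):
    print(f"Same workload at {m}x the Pro price: ${pro * m:,.2f} "
          f"(switching saves {savings_fraction(m):.0%})")
```

For that hypothetical month, Pro at cache-miss rates comes to $870 and Flash at cache-hit rates to $14. Paying 10 to 20 times the Pro figure elsewhere would mean $8,700 to $17,400, so switching would save 90 to 95 percent of that spend.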

DeepSeek is backed by High-Flyer Capital Management, and it has figured out how to optimize for hardware beyond Nvidia's data-center GPUs such as the H100. It uses Huawei Ascend 910C chips and Cambricon accelerators. While Washington tries to choke off China's access to high-end silicon, DeepSeek is building models that hold their own against Western counterparts on "inferior" hardware. It's an engineering flex that should make Silicon Valley very nervous.

Coding Performance That Rivals the Giants

If you use AI to code, these numbers should get your attention. Leaked benchmarks show V4 hitting around 90% on HumanEval and over 80% on SWE-bench Verified.

- HumanEval: 90% (DeepSeek V4) vs ~88% (Claude Opus 4.6)

- SWE-bench: 80%+ (DeepSeek V4) vs 81% (Claude Opus 4.6)

These aren't just "good for an open-source model" scores. They put V4 on par with the best closed models in the world. SWE-bench is particularly tough because it requires the model to resolve real-world GitHub issues: it has to read the code, find the bug, and write a patch that passes the project's tests. V4's ability to hold an entire repo in its million-token context plausibly explains a good part of its strength here.
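For context on what a number like "90% on HumanEval" measures: HumanEval results are usually reported as pass@1, computed with the unbiased pass@k estimator from the paper that introduced the benchmark (Chen et al., 2021). The sketch below implements that estimator. The article doesn't say exactly how DeepSeek's figures were produced, so treat this as background rather than their method. SWE-bench Verified is scored more simply, as the share of issues the model resolves.

```python
from math import comb

def pass_at_k(n, c, k):
    """Unbiased pass@k for one problem: n samples were generated and c passed the unit tests.

    Estimates the probability that at least one of k randomly drawn samples passes:
    1 - C(n - c, k) / C(n, k).
    """
    if n - c < k:
        return 1.0  # every possible draw of k samples includes a passing one
    return 1.0 - comb(n - c, k) / comb(n, k)

def benchmark_score(results, k=1):
    """Mean pass@k over problems; `results` holds one (n, c) pair per problem."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)
```

For k=1 the formula reduces to c/n per problem, so pass@1 is simply the average fraction of samples that pass.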

Native Multimodal is the New Baseline

DeepSeek V4 isn't just a text box. It was trained from day one to understand images and video natively. Most older models use a "late-fusion" approach: a separate vision "eye" bolted onto a text "brain." It works, but it's clunky and prone to losing context.

Because V4 is natively multimodal, its reasoning should be more consistent across text and visuals. If you show it a video of a complex mechanical repair, it isn't just describing the frames; it's applying its core reasoning to the visual data. In principle, that means fewer hallucinations and lower latency. You can feed it scanned invoices, architectural blueprints, or even screen recordings of software bugs, and it treats that data with the same depth as a text prompt.
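DeepSeek hasn't said how V4 turns pixels into tokens, but a common pattern in natively multimodal models is to cut each image, or each sampled video frame, into fixed-size patches that enter the same token sequence as the text. The sketch below uses that pattern to estimate how much context visual input consumes. The 14-pixel patch, 448 x 448 resolution, and 1 fps sampling rate are all assumptions, not V4's published settings.

```python
import math

def image_tokens(width, height, patch=14):
    """Patch tokens for one image: one token per patch x patch pixel square (edges padded up)."""
    return math.ceil(width / patch) * math.ceil(height / patch)

def video_tokens(seconds, fps, width, height, patch=14):
    """Tokens for a clip sampled at `fps` frames per second, with no token merging or compression."""
    frames = math.ceil(seconds * fps)
    return frames * image_tokens(width, height, patch)
```

Under these assumptions, a 448 x 448 frame is 32 x 32 = 1,024 tokens, a one-minute screen recording sampled at 1 fps is 61,440 tokens, and a ten-minute one is 614,400 tokens, well over half the million-token window. Many real systems merge neighboring patches, so actual counts can be lower.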

Can You Actually Run This Yourself?

The short answer is yes, but you'll need some serious gear. DeepSeek has a history of being open-weights, and V4 is expected to follow suit with an Apache 2.0 license.

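To run the full Pro version locally? Forget it. You'd need a cluster. But for the quantized versions, the quoted requirements are surprisingly "prosumer":

- INT8 Quantization: Requires about 48GB of VRAM, which you could split across two RTX 4090s (24GB each).

- INT4 Quantization: Might squeeze onto a single RTX 5090 (32GB VRAM).

Treat those numbers with caution. They line up with the roughly 49 billion active parameters (about 49GB at 8 bits, about 25GB at 4 bits), not the full 1.6 trillion. An MoE model still has to keep every expert somewhere, and the full weights come to roughly 1.6TB at INT8 and 800GB at INT4. Fitting on hardware like this implies a much smaller variant, or offloading inactive experts to system RAM at a steep cost in speed.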
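A quick way to sanity-check quantized-memory claims is the rule of thumb that weight memory is roughly parameter count times bits per parameter, divided by 8. The sketch below applies it to the article's total and active parameter counts. It counts weights only (no KV cache or activations, which can be substantial at long context lengths), so treat its results as lower bounds.

```python
GPU_VRAM_GB = {"RTX 4090": 24, "RTX 5090": 32}

def weight_memory_gb(n_params, bits_per_param):
    """Memory for the weights alone, in GB (10**9 bytes).

    Ignores the KV cache, activations, and runtime overhead, so real needs are higher.
    """
    return n_params * bits_per_param / 8 / 1e9

def fits(n_params, bits_per_param, vram_gb):
    """True if the weights alone fit in `vram_gb` gigabytes."""
    return weight_memory_gb(n_params, bits_per_param) <= vram_gb

TOTAL_PARAMS = 1.6e12  # all experts, per the article
ACTIVE_PARAMS = 49e9   # parameters used per token, per the article

for label, n in (("total", TOTAL_PARAMS), ("active", ACTIVE_PARAMS)):
    for bits in (8, 4):
        print(f"{label:>6} @ INT{bits}: {weight_memory_gb(n, bits):,.1f} GB")
```

Comparing the "total" and "active" rows shows why memory estimates for an MoE model have to start from the total parameter count.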

If a capable variant really does run on a single high-end workstation, that accessibility is a nightmare for closed-source providers. When a company can host a frontier-level model itself instead of paying six figures a month in API fees, the leverage shifts entirely to the user.

The Catch Everyone Ignores

Don't get too excited yet. There are three big caveats you need to keep in mind before you port your entire tech stack over to DeepSeek.

First, the benchmarks are mostly internal. DeepSeek is famous for its transparency, but until third-party labs verify that 90% HumanEval score, take it with a grain of salt. Models can end up "over-trained" on benchmark data, whether deliberately or because test problems leak into the training set, and then look better on paper than they are in real-world use.
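Checking for benchmark contamination is conceptually simple: look for long verbatim overlaps between benchmark problems and training data. The sketch below flags any shared 13-word sequence, similar in spirit to the 13-gram overlap analysis in OpenAI's GPT-3 paper; the function names and the default threshold are illustrative choices. Only someone with access to the training corpus can run it for real, which is why independent tests on fresh, unpublished problems matter.

```python
def ngrams(text, n=13):
    """Set of lowercase word n-grams in `text` (empty if it has fewer than n words)."""
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}

def is_contaminated(benchmark_item, training_docs, n=13):
    """True if any n-gram of the benchmark item appears verbatim in a training document."""
    item_grams = ngrams(benchmark_item, n)
    return any(item_grams & ngrams(doc, n) for doc in training_docs)

def contamination_rate(benchmark_items, training_docs, n=13):
    """Fraction of benchmark items flagged as overlapping the training documents."""
    flagged = sum(is_contaminated(item, training_docs, n) for item in benchmark_items)
    return flagged / len(benchmark_items)
```

Exact n-gram matching misses paraphrased leaks, so a clean result is weaker evidence than a flagged one.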

Second, there's the geopolitical mess. The White House is already making noise about IP theft and security risks. If you're a US-based defense contractor or a highly regulated financial firm, using a model from a company backed by Chinese capital might be a non-starter, regardless of how cheap it is.

Third, native video analysis is still in its infancy. While DeepSeek claims native multimodal support, we haven't seen V4 handle long-form video with the same precision it brings to text. The "lost in the middle" problem for video is an entirely different beast that hasn't been solved yet.

Getting Started with the Preview

If you want to test this today, don't wait for the final release. The preview is live on the DeepSeek website.

- Instant Mode: Good for quick tasks and general chat.

- Expert Mode: This is where you get the full 1.6T-parameter model. Use it for coding and complex math.

- API: If you're a dev, update your endpoints. The API already supports the 1M context length.
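For developers, DeepSeek's existing API follows the OpenAI-style chat completions format at `https://api.deepseek.com/chat/completions`. The sketch below builds and sends such a request using only Python's standard library. The article doesn't give the V4 preview's model identifier, so the code reads it from a hypothetical `DEEPSEEK_MODEL` environment variable and falls back to `deepseek-chat`, DeepSeek's existing chat model id. Check the official docs for the actual V4 name and its limits.

```python
import json
import os
import urllib.request

API_URL = "https://api.deepseek.com/chat/completions"
# Hypothetical: the V4 preview model id is not given in the article; override via DEEPSEEK_MODEL.
MODEL = os.environ.get("DEEPSEEK_MODEL", "deepseek-chat")

def build_request(prompt, api_key, model=MODEL):
    """Build a non-streaming chat completions POST request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "Authorization": f"Bearer {api_key}"},
        method="POST",
    )

def ask(prompt, api_key, model=MODEL):
    """Send the prompt and return the first choice's text."""
    # Generous timeout: very long prompts can take a while to process.
    with urllib.request.urlopen(build_request(prompt, api_key, model), timeout=600) as resp:
        data = json.load(resp)
    return data["choices"][0]["message"]["content"]
```

Usage: `ask("Explain this stack trace: ...", os.environ["DEEPSEEK_API_KEY"])`. Keeping request construction separate from sending makes it easy to inspect or log the payload before spending tokens.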

Switch your heaviest coding tasks to the V4 Pro preview for a week. Compare the logic to whatever you're currently paying for. If it matches the quality, you've just found a way to cut your AI overhead by 90%. That's not just a technical win; it's a massive competitive advantage. Stop overpaying for "brand name" AI when the raw performance is now a commodity.